The Next Evolution of Board Game Tiles

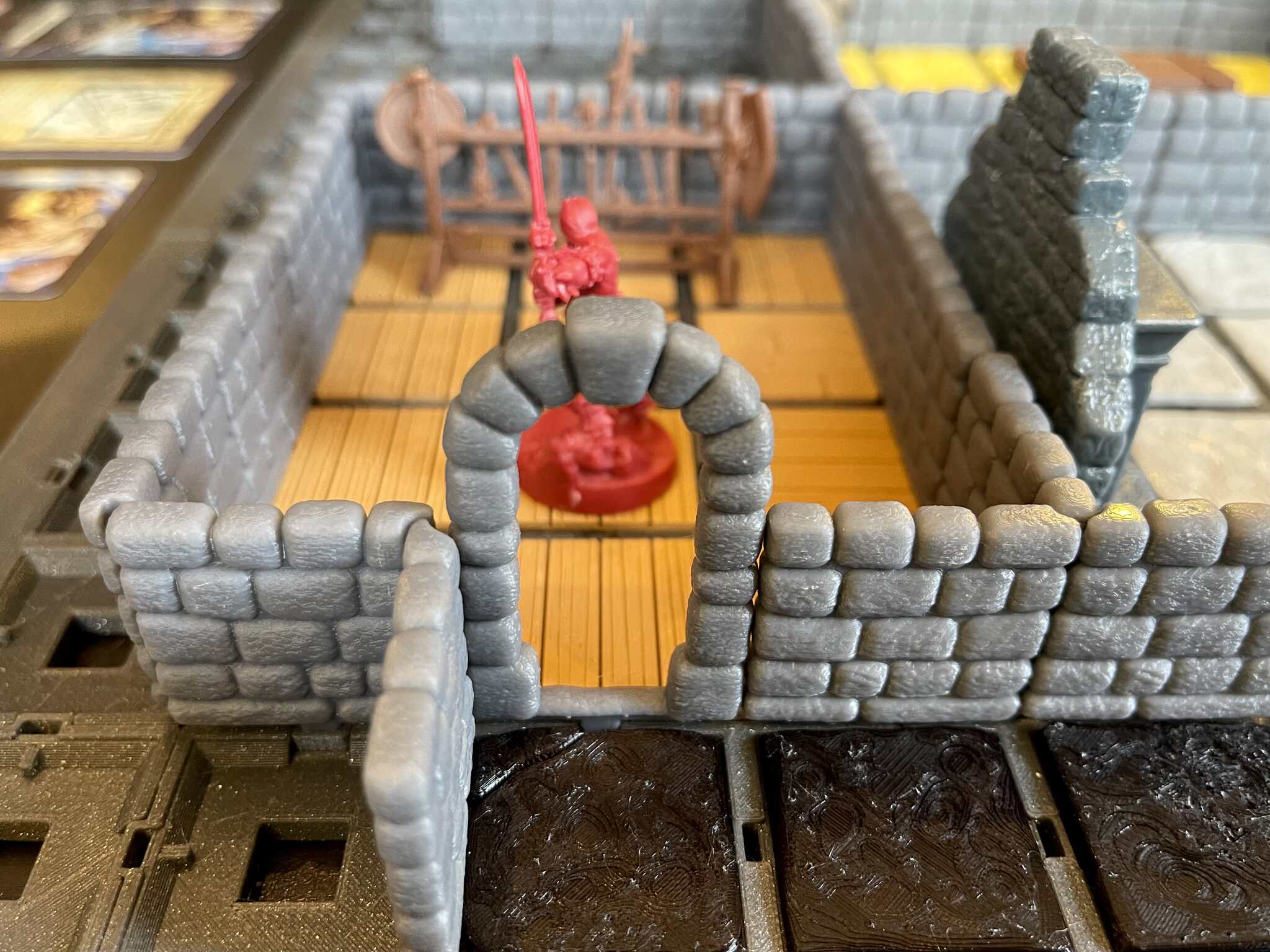

Dynamod creates 3D-printable dungeon tile systems that are highly modular, with dynamically changing parts that make tabletop gaming more engaging than ever before.


Our first focus is square dungeon tiles with terrain, walls, doors, traps and much more. We currently have all the parts needed to recreate and enhance the traditional HeroQuest game system. The same system is also well suited to building Dungeons and Dragons maps.

When you become a Patron, you immediately get access to ALL of the STL files in this collection. New models are released to you several times each month.


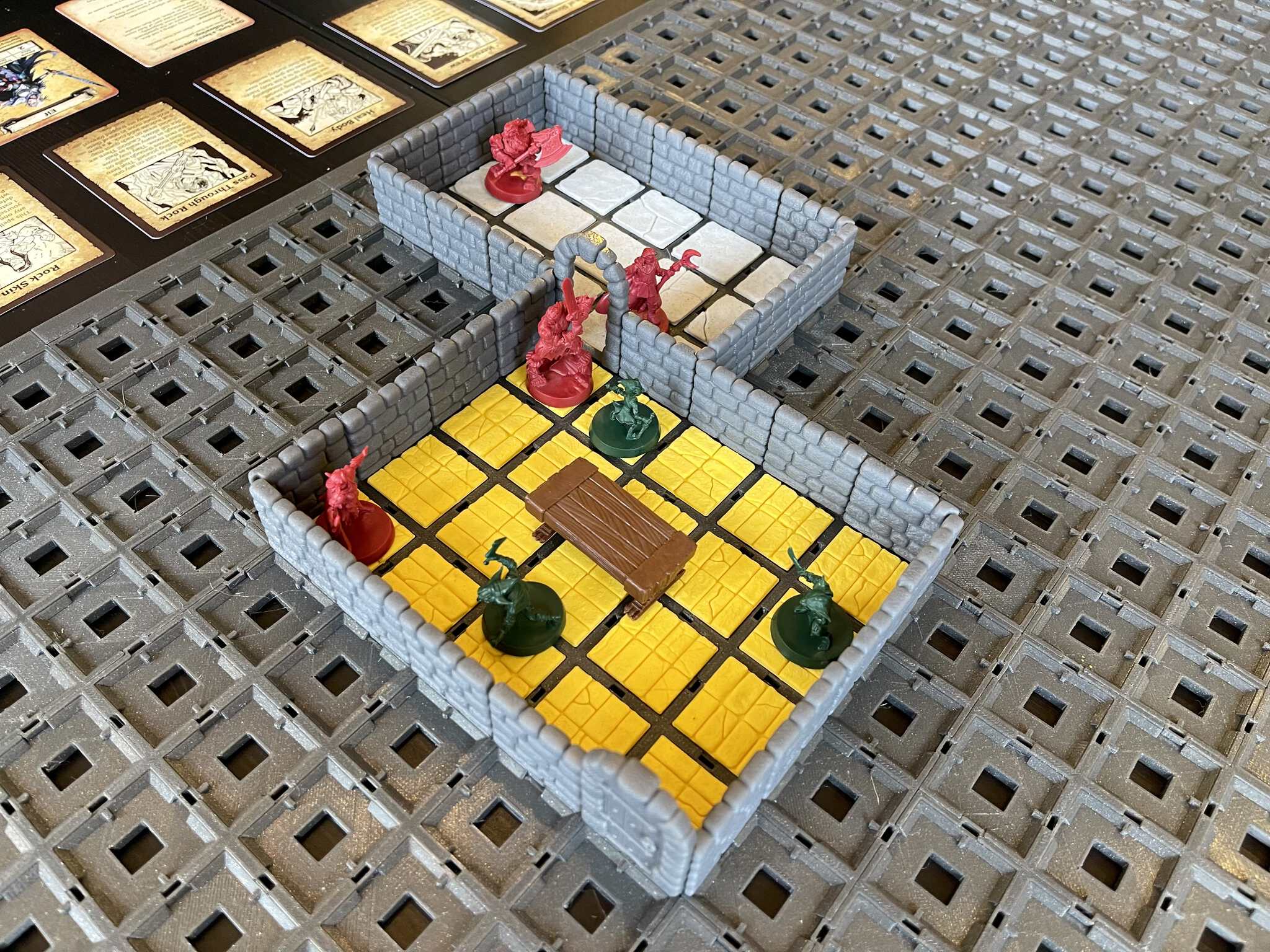

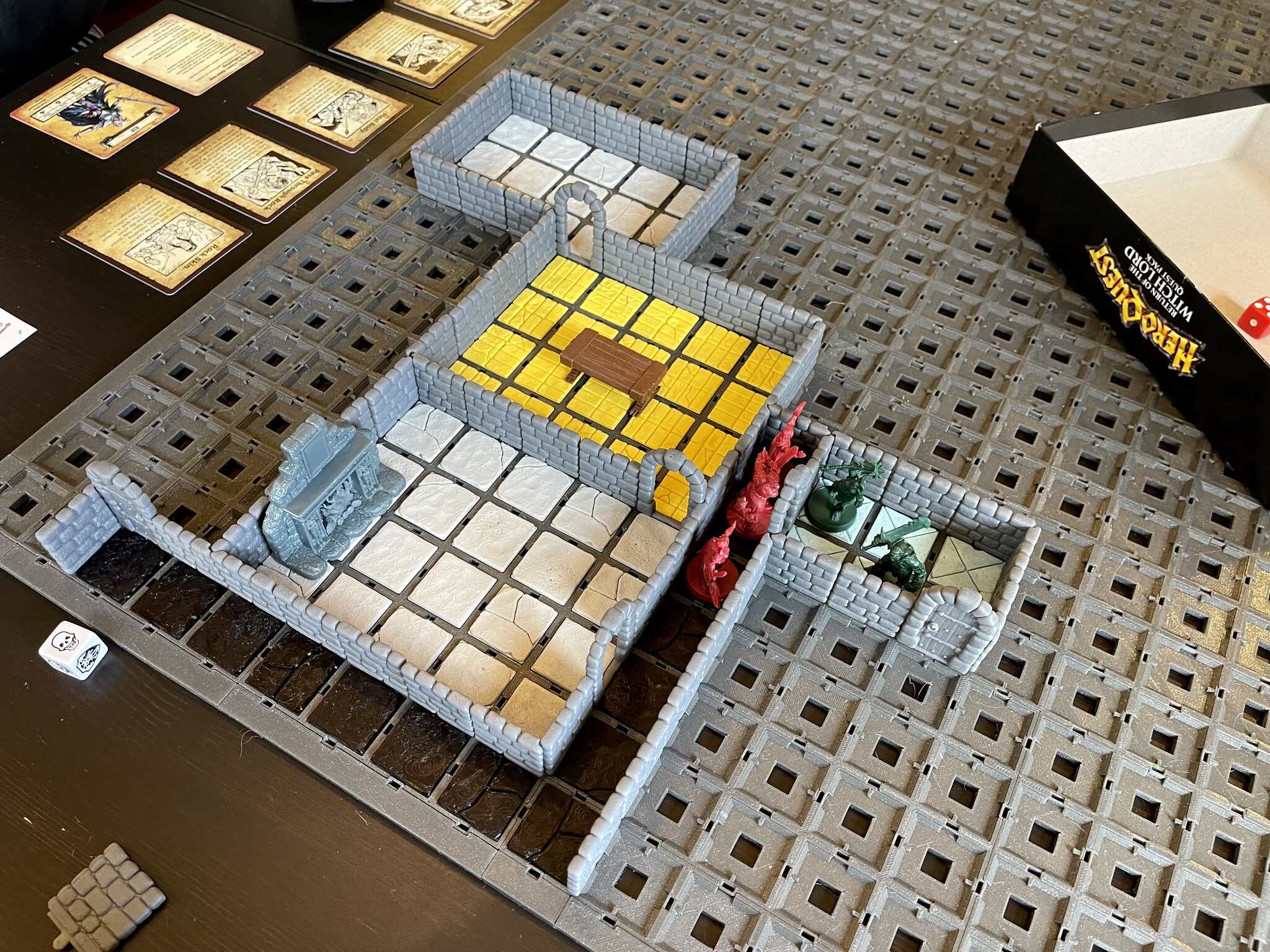

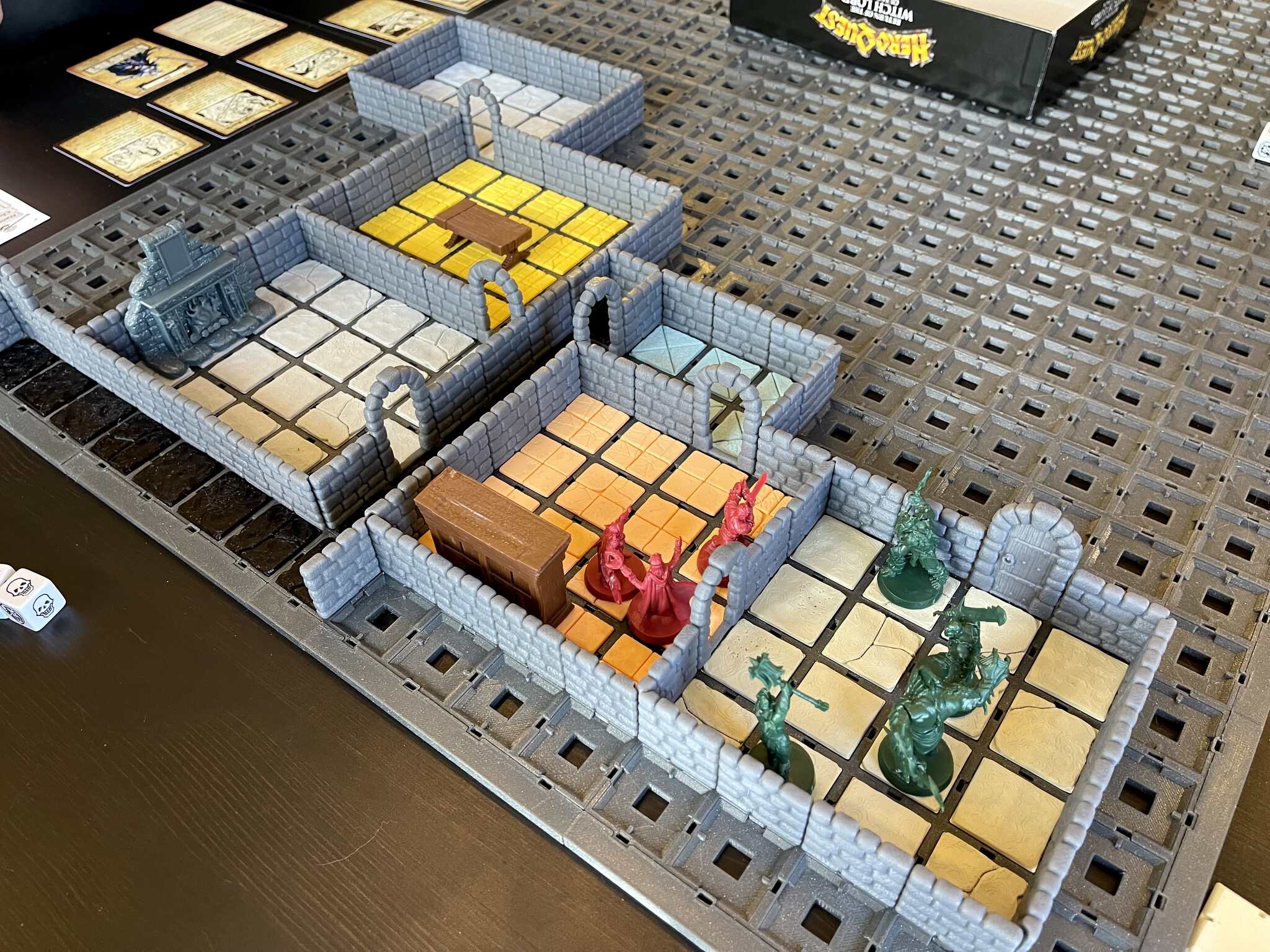

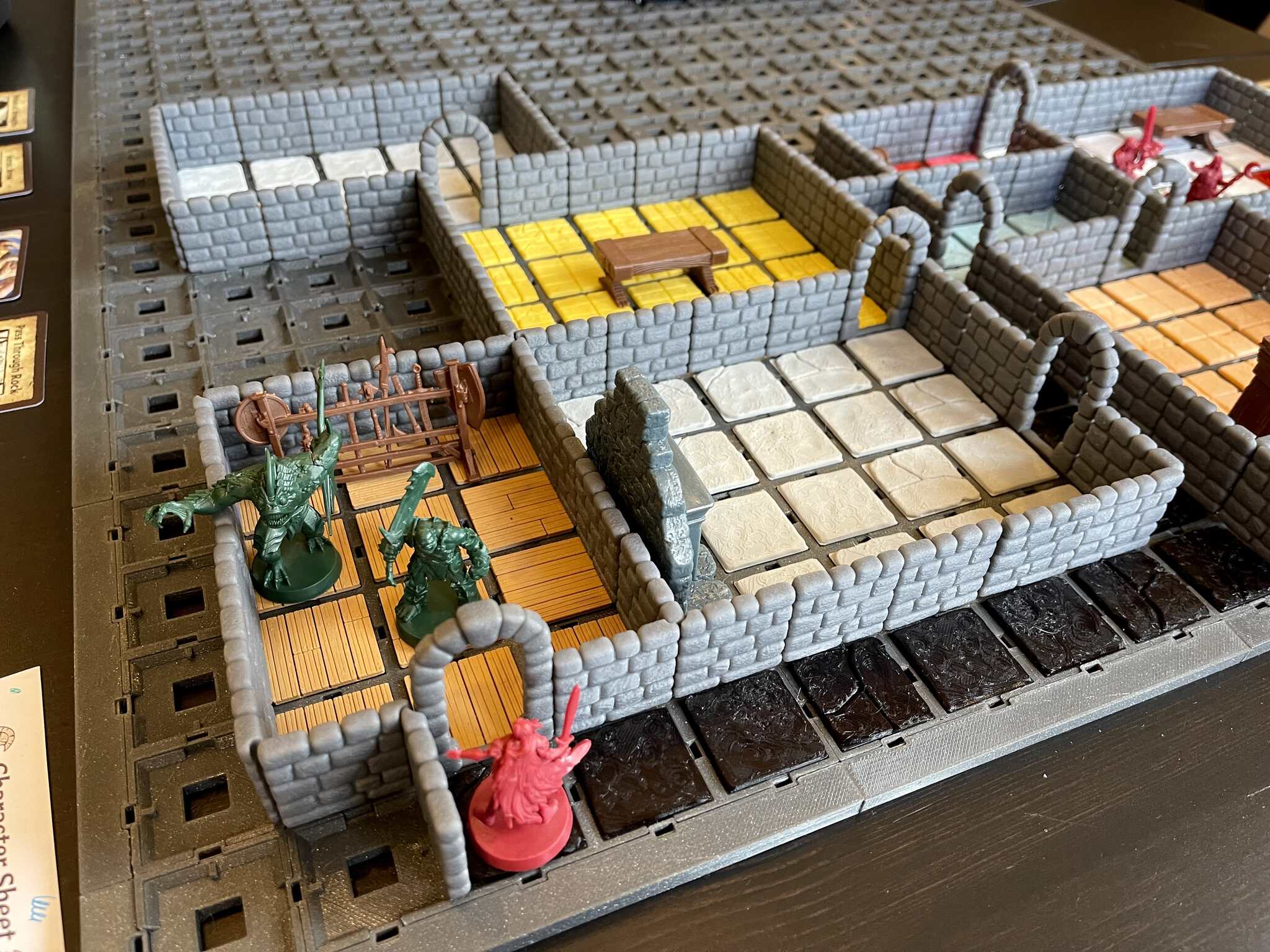

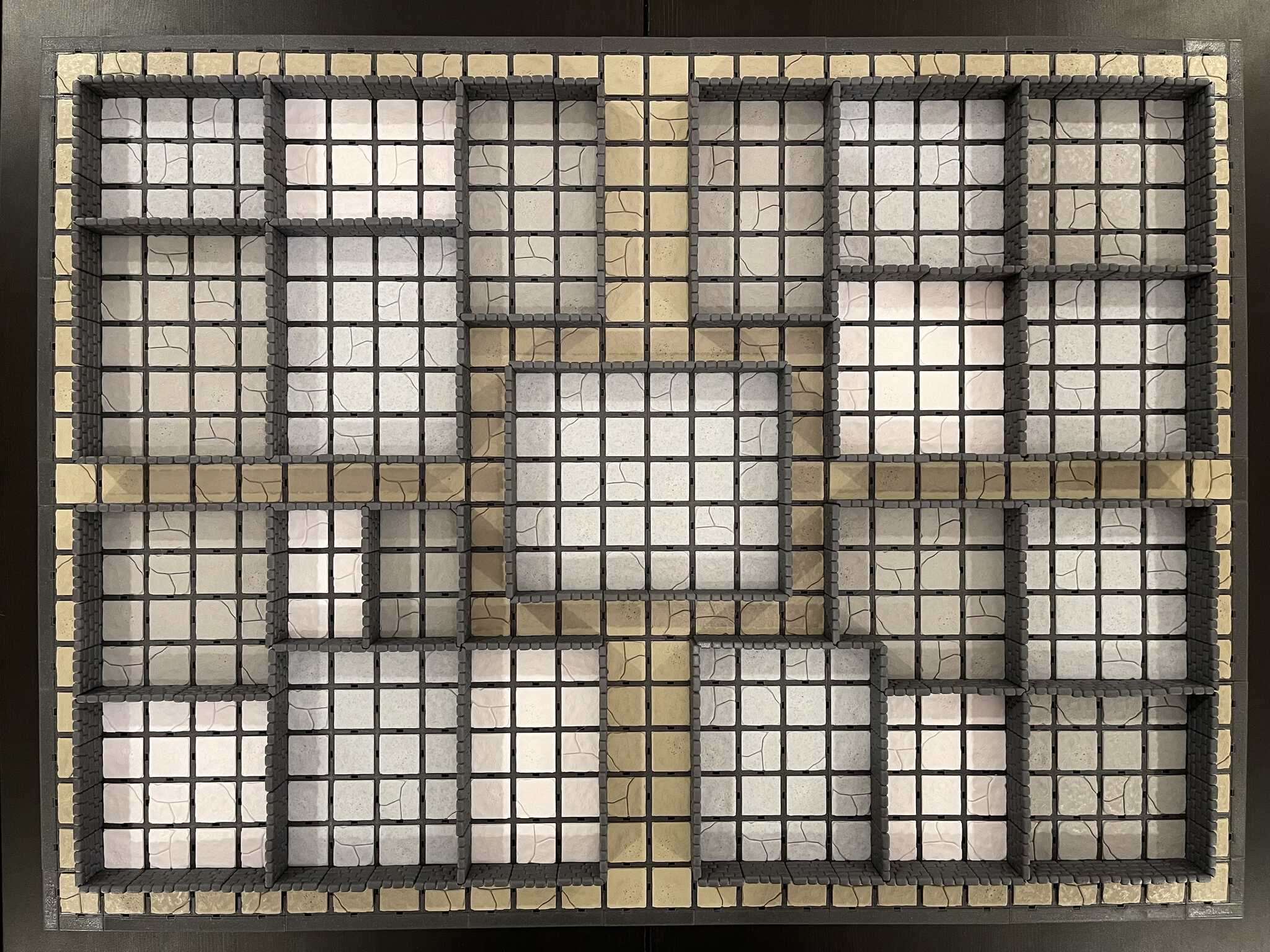

Gameplay Gallery

Below are a few pictures of example configurations and gameplay sessions.

Dynamod allows you to create any square grid layout you can imagine. You're limited only by the number of individual parts you 3D print or purchase.

Features

The Dynamod dungeon tile system offers the following exciting and engaging features:

- Perfect grids without any wall offsets, making it possible to recreate any existing map grid

- Dynamically switchable walls, doors and terrain bring full fog-of-war mechanics to your board game

- Walls and doors slide in from the top and can be swapped by simply lifting them out and dropping new ones in, without detaching any clips. This makes it easy to turn closed doors into open doors, or walls into secret doors

- Walls sit between two tiles and share the space, so every square offers a full square inch of playing space without oversized terrain

- Tiles measure 32 x 32 mm and terrain pieces 28 x 28 mm

- Existing scenery from square-inch-based games, such as the HeroQuest reissue, feels right at home

- Fast and flexible tile assembly with three options that suit any gameplay style:

  - Without clips, the tiles are stable enough for quick gameplay; a slight snap and an alignment feature keep them from sliding around freely

  - The tiles can hold optional magnets directly, with no clips required

  - Optional sturdy clips give a more permanent arrangement that doesn't feel loose or wobbly

- Tiles act as a structural base into which the terrain snaps from above. This lets you reuse tiles and even switch terrain during gameplay; for instance, a floor can become a pit trap

- Filament-painted terrain versions that offer detailed color and can be printed on any FDM printer

- Pre-configured larger rooms with multiple tiles for even quicker setup

- Assembly is fully mechanical and requires no glue

- Fast print times and conservative material usage

- Seamless integration with the 3D-printed StageTop board game table

- Designed both for FDM and resin printing

- Full set of remix tools to adapt STL models you already own

- Detailed print instructions for PrusaSlicer, Cura, IdeaMaker and Simplify3D

- All models have been printed and tested on multiple FDM and resin printers from different brands
